Quick Takeaways

- LED Volumes replace green screens with real-time 3D environments.

- The workflow relies on a "brain" (usually a game engine) to render backgrounds.

- Camera tracking ensures the perspective of the digital world shifts as the camera moves.

- Lighting is unified because the screens actually emit light onto the actors.

The Core Concept of the LED Volume

At its simplest, an LED Volume is a massive, wraparound screen made of high-density LED panels that display a 3D environment in real time. Unlike a flat TV screen, a volume usually consists of a curved wall and a ceiling, creating a seamless "bucket" of light and imagery. This setup allows for ICVFX (In-Camera Visual Effects), meaning the background you see on the monitor is the final shot, or very close to it.

Why does this matter? Because green screens are a nightmare for reflections. If you're filming a character wearing a chrome helmet, a green screen will leave a green tint on the visor that can take many hours of work to "paint out" in post. With an LED Volume, the helmet reflects the actual digital sunset or city skyline displayed behind the actor. It's a fundamental shift from "fixing it in post" to "creating it in pre."

The Engine Driving the Image

You can't just play a movie file on these screens. If you did, the perspective would be wrong the moment the camera moved. To solve this, volumes use a game engine, most commonly Unreal Engine, a real-time 3D creation tool originally built for video games. The engine renders the environment in real time, often at 60 frames per second or higher, adjusting the image based on where the camera is and where it is pointed.

This process is called "frustum rendering." The engine doesn't render the entire 360-degree world at full resolution. Instead, it creates a high-detail "inner frustum": a slice of the image that matches the camera's field of view. Everything outside that slice is rendered at a lower resolution to save computing power, since it only has to light the set, not hold up on camera. As the camera pans right, the high-res slice moves with it, keeping the illusion intact in the camera's view.
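To get a feel for how large that high-detail slice is, here is a minimal sketch that estimates how much of a flat wall the camera sees head-on. It assumes a simple pinhole-style lens and a wall perpendicular to the lens axis; the function names and the 10% safety margin (`padding`) are illustrative assumptions, not values taken from any engine.

```python
import math

def horizontal_fov_deg(sensor_width_mm: float, focal_length_mm: float) -> float:
    """Horizontal field of view of an idealized rectilinear lens."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))

def inner_frustum_width_m(fov_deg: float, wall_distance_m: float,
                          padding: float = 1.1) -> float:
    """Width of wall covered by the camera's view, scaled by a safety margin.

    `padding` is a hypothetical margin so the high-detail region stays
    slightly larger than what the lens actually sees.
    """
    visible = 2 * wall_distance_m * math.tan(math.radians(fov_deg) / 2)
    return visible * padding

fov = horizontal_fov_deg(sensor_width_mm=36.0, focal_length_mm=35.0)
width = inner_frustum_width_m(fov, wall_distance_m=5.0)
print(f"FOV: {fov:.1f} deg, inner frustum width: {width:.2f} m")
```

A longer lens or a camera closer to the wall shrinks this region, which is why only a small part of a large wall needs full-resolution rendering at any moment.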

| Feature | Green Screen (Chroma Key) | LED Volume (ICVFX) |
|---|---|---|
| Lighting | Simulated in post-production | Real-time emission from screens |
| Actor Experience | Acting against a blank wall | Immersed in the actual environment |
| Post-Production | Heavy compositing and rotoscoping | Significant reduction in matte work |
| Flexibility | Change background easily in post | Requires "Virtual Scouting" in pre-production |

The Role of Camera Tracking

For the 3D background to feel real, the computer needs to know exactly where the camera is in physical space, down to the millimeter. This is handled by Camera Tracking systems. These systems use sensors (either infrared markers on the camera rig or internal gyroscopes) to feed spatial data back to the rendering engine.

If the camera moves forward by two inches, the engine moves its virtual camera by the same two inches and re-renders the scene from the new position. Nearby digital objects shift noticeably, while distant mountains barely move. Without this, the image would look like a flat painting, and the "parallax effect" (where objects closer to the camera appear to move faster than objects far away) would be gone. This synchronization happens in milliseconds, keeping any lag between the physical move and the digital update too small to notice.
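The geometry behind this can be sketched in a few lines. The example below works in a top-down 2D view and finds where on the wall a virtual object must be drawn so that it sits on the camera's line of sight. The wall distance, object positions, and the `wall_x` helper are all illustrative assumptions; a real engine does this with full 3D off-axis projection.

```python
def wall_x(camera, point, wall_z):
    """Return the x position on the wall (the plane z = wall_z) where a
    virtual point must be drawn to sit on the camera's line of sight."""
    cx, cz = camera
    px, pz = point
    t = (wall_z - cz) / (pz - cz)  # fraction of the way from camera to point
    return cx + t * (px - cx)

WALL_Z = 4.0                # wall is 4 m in front of the starting position
tree = (0.0, 6.0)           # virtual object 2 m "behind" the wall
mountain = (0.0, 1000.0)    # virtual object 1 km away

start = (0.0, 0.0)
moved = (0.5, 0.0)          # tracked camera slides 0.5 m to the right

for name, obj in (("tree", tree), ("mountain", mountain)):
    on_wall = wall_x(moved, obj, WALL_Z) - wall_x(start, obj, WALL_Z)
    in_view = on_wall - (moved[0] - start[0])  # shift relative to the camera
    print(f"{name}: {on_wall:+.3f} m on the wall, {in_view:+.3f} m relative to the camera")
```

The mountain's image travels almost the full 0.5 m along the wall, so it barely moves relative to the camera, while the tree's image lags behind and visibly slides across the frame. That difference is the parallax the tracking data makes possible.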

The Pre-Production Pivot: Virtual Scouting

In a traditional shoot, you might decide the exact look of a mountain range while editing. In an LED Volume, that's too late. You have to move the heavy lifting to the beginning of the project through Virtual Scouting. This is where the director and cinematographer put on a VR headset and literally walk through the digital set before it's ever displayed on the LED walls.

During this phase, they can move mountains, change the time of day, or adjust the placement of a digital building. They save the camera positions and framings they like as "virtual camera" presets. When they get to the actual stage, they just click a button, and the LED Volume snaps to that exact composition. It turns the director into a digital architect, allowing them to plan lenses and angles with mathematical precision.
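As a rough illustration of what such a preset might hold, the sketch below stores a few scouted setups and reads them back. The `VirtualCameraPreset` class, its fields, and the JSON layout are hypothetical; real tools keep this data in their own project formats.

```python
import json
from dataclasses import dataclass, asdict
from typing import List

@dataclass
class VirtualCameraPreset:
    name: str
    position_m: List[float]    # x, y, z in stage coordinates (assumed)
    rotation_deg: List[float]  # pan, tilt, roll (assumed convention)
    focal_length_mm: float
    time_of_day: str           # e.g. "18:30" for a sunset look

def dump_presets(presets: List[VirtualCameraPreset]) -> str:
    """Serialize presets saved during scouting."""
    return json.dumps([asdict(p) for p in presets], indent=2)

def load_presets(text: str) -> List[VirtualCameraPreset]:
    """Rebuild presets on the stage from the saved text."""
    return [VirtualCameraPreset(**item) for item in json.loads(text)]

scouted = [
    VirtualCameraPreset("ridge_wide", [0.0, 1.6, -3.0], [15.0, -2.0, 0.0], 24.0, "18:30"),
    VirtualCameraPreset("ridge_close", [1.2, 1.5, -1.0], [5.0, 0.0, 0.0], 85.0, "18:45"),
]

saved = dump_presets(scouted)
restored = load_presets(saved)
recalled = next(p for p in restored if p.name == "ridge_wide")
print(recalled)
```

Recalling a preset by name is the "click a button" step: the stored position, lens, and time of day are enough to rebuild the same composition on the wall.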

Technical Hurdles and Pitfalls

It sounds like a magic wand, but LED volumes have their own set of headaches. The biggest is the "moiré effect." This happens when the camera sensor's pixel grid clashes with the LED panel's pixel grid, creating weird, shimmering patterns in the image. To avoid this, cinematographers have to be very careful with focus and distance; if the camera is too close to the wall or the focus is too sharp on the background, the pixels become visible.

Then there's the issue of color. LED panels emit a narrow, specific spectrum of light that may not render your actors' skin tones accurately. Lighting technicians often have to add "fill lights" (traditional softboxes or dedicated LED fixtures) to bridge the gap between the screen's light and the physical reality of the scene. If you rely 100% on the volume for lighting, your actors can sometimes look a bit "flat" or unnaturally saturated.
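One way to reason about the distance part of that advice is to estimate how large each LED pixel appears on the sensor. The sketch below assumes an idealized pinhole lens with the wall in perfect focus; the panel pitch, sensor size, and function name are illustrative assumptions, and the result is not a moiré predictor, only a way to compare setups.

```python
def photosites_per_led_pixel(led_pitch_mm: float, distance_mm: float,
                             focal_length_mm: float, sensor_width_mm: float,
                             sensor_width_px: int) -> float:
    """Approximate number of sensor photosites spanned by one LED pixel."""
    # Size of one LED pixel as projected onto the sensor (pinhole model).
    projected_pitch_mm = led_pitch_mm * focal_length_mm / distance_mm
    # Spacing between neighbouring photosites on the sensor.
    photosite_pitch_mm = sensor_width_mm / sensor_width_px
    return projected_pitch_mm / photosite_pitch_mm

for distance_m in (3, 6, 12):
    ratio = photosites_per_led_pixel(
        led_pitch_mm=2.6, distance_mm=distance_m * 1000,
        focal_length_mm=35.0, sensor_width_mm=36.0, sensor_width_px=6000,
    )
    print(f"{distance_m} m from the wall: {ratio:.2f} photosites per LED pixel")
```

Doubling the distance halves the ratio, so the individual LED pixels become harder for the sensor to resolve. Softening focus on the wall has a similar effect optically, which this simplified model does not capture.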

The Future of In-Camera VFX

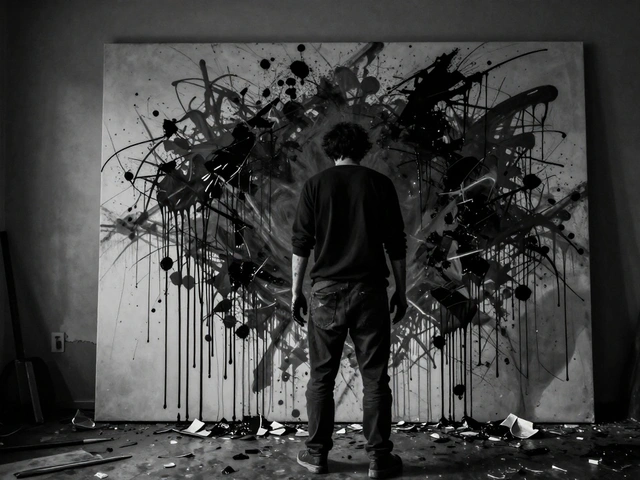

As we move toward 2026, we're seeing the rise of "hybrid volumes." These combine smaller LED arrays with traditional projection mapping to lower costs. We're also seeing better integration with AI-generated environments, where a director can describe a scene ("a neon-lit Tokyo street in the rain") and the engine generates the 3D assets in real time rather than having an artist spend weeks modeling every trash can and streetlight.

The ultimate goal is the total disappearance of the "set." We're heading toward a world where the physical location is just a blank canvas, and the entire visual narrative is crafted in a digital space that reacts to the human elements in the room. It doesn't replace the craft of cinematography; it just gives the cinematographer a digital paintbrush that works in real-time.

Does an LED Volume replace the need for a VFX team?

No. It just moves the VFX team's work from post-production to pre-production. You still need artists to build the 3D assets, lighting experts to tune the Unreal Engine scenes, and technicians to manage the hardware. The difference is that their work is seen on set rather than months later in a dark editing suite.

Can you use any camera with an LED Volume?

Yes, but the camera must be compatible with a tracking system. Whether it's an ARRI Alexa or a RED camera, you need to attach a tracking puck or use an internal system that can tell the rendering engine exactly where the lens is positioned in 3D space.

What is the biggest advantage over a green screen?

The primary advantage is the light. LED screens emit light, which creates natural reflections on metallic or glossy surfaces and provides a consistent light source for the actors' skin. This eliminates the "green spill" associated with chroma keying and makes the final image look far more organic.

Is the LED Volume too expensive for indie filmmakers?

Full-scale volumes are expensive, but "mini-volumes" and LED walls for product photography are becoming accessible. Many indie creators now rent time at mid-sized stages or use smaller LED panels for specific shots, rather than building a full wraparound environment.

What is "moiré" and how do you fix it?

Moiré is a visual interference pattern caused by the camera sensor capturing the grid of LED pixels. It's usually fixed by adjusting the distance between the camera and the screen, changing the focus (slightly softening the background), or using specific lens filters to blend the image.