The Core of the Magic: Real-Time Rendering

At the heart of this shift is Real-Time Compositing: the process of combining multiple visual elements, such as live-action footage and computer-generated imagery, instantly during production. Unlike traditional offline rendering, which can take hours for a single frame, real-time systems produce images at 24 to 60 frames per second. This means the director sees a high-fidelity approximation of the final movie while the camera is still rolling.
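To put those frame rates in perspective, here is a small Python sketch of the time budget a real-time system has for each frame. The function name is made up for illustration; the arithmetic is simply one second divided by the frame rate.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


if __name__ == "__main__":
    for fps in (24, 30, 60):
        # 24 fps leaves about 41.7 ms per frame, 60 fps about 16.7 ms
        print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
```

Compare that with the hours per frame of an offline render and the scale of the speed-up becomes clear.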

To make this happen, filmmakers use Game Engines. These are software environments designed to render complex 3D worlds for players in real time. By repurposing them for film, we move the heavy lifting of visual effects from the end of the pipeline to the beginning. If a mountain in the background looks too tall or the lighting is too harsh, the digital artist can change it in seconds, and the director sees the result immediately on their monitor.

Quick Takeaways

- Real-time compositing removes the guesswork from VFX-heavy shoots.

- Game engines provide the horsepower needed to render 3D environments instantly.

- It allows for better actor performances by replacing green screens with visual cues.

- Lighting is synchronized between the digital world and the physical set.

Turning Game Engines into Film Sets

When we talk about game engines in film, we're usually talking about Unreal Engine or Unity. While Unity is great for smaller-scale interactive projects, Unreal Engine has become the industry standard for high-end cinematography due to its Lumen global illumination system, which simulates how light bounces off surfaces realistically.

The workflow generally follows a specific path. First, the 3D environment is built in the engine. Then, that environment is linked to the camera's position using Camera Tracking technology. Whether it's an optical system or an inertial sensor, the game engine knows exactly where the physical camera is in the room. As the camera moves, the digital background shifts in perfect perspective, creating a seamless illusion of depth.
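To make the tracking step a little more concrete, here is a minimal top-down sketch in Python. It assumes a hypothetical `TrackedPose` sample (floor position plus pan angle) and shows how a point in the digital world is re-expressed relative to the moving camera. The class, the axis conventions, and the function names are illustrative assumptions, not Unreal Engine's API or any tracking vendor's data format.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class TrackedPose:
    """Hypothetical tracker sample: floor position in metres plus pan angle."""
    x: float        # metres, positive toward stage right
    z: float        # metres, positive toward the wall
    yaw_deg: float  # pan angle; 0 faces +z, positive pans toward +x


def world_to_camera(pose: TrackedPose, px: float, pz: float) -> Tuple[float, float]:
    """Return (right, forward) coordinates of a world point relative to the camera."""
    dx, dz = px - pose.x, pz - pose.z
    yaw = math.radians(pose.yaw_deg)
    right = dx * math.cos(yaw) - dz * math.sin(yaw)
    forward = dx * math.sin(yaw) + dz * math.cos(yaw)
    return right, forward


def bearing_deg(pose: TrackedPose, px: float, pz: float) -> float:
    """Horizontal angle of the point off the lens axis (negative = camera left)."""
    right, forward = world_to_camera(pose, px, pz)
    return math.degrees(math.atan2(right, forward))


if __name__ == "__main__":
    mountain = (0.0, 100.0)  # a digital mountain 100 m "behind" the stage origin
    for pose in (TrackedPose(0.0, 0.0, 0.0), TrackedPose(2.0, 0.0, 0.0)):
        print(pose, "->", round(bearing_deg(pose, *mountain), 2), "degrees")
```

A real system works in full 3D with roll and tilt as well, but the idea is the same: every new tracker sample changes where each digital object sits relative to the lens.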

| Feature | Traditional VFX | Real-Time Compositing |
|---|---|---|
| Visual Feedback | Green screen / Imagination | Instant visual representation |
| Lighting Alignment | Estimated in post-production | Synchronized via DMX/LEDs |
| Iterative Speed | Weeks (Render cycles) | Seconds (Real-time updates) |
| Actor Experience | Acting against a void | Immersive digital environments |

The Rise of the LED Volume

The most impressive application of this tech is the LED Volume. Instead of a green screen, the actors are surrounded by massive walls of high-resolution LED panels. These panels display the game engine's render in real time. This is the backbone of Virtual Production.

Why is this better than a green screen? Lighting. In a traditional setup, you have to fake the reflections of a sunset on an actor's helmet using a gold reflector. In an LED Volume, the sunset is actually emitting light onto the actor. The reflections are real, the shadows are natural, and the skin tones look correct because they are being hit by the actual colors of the digital environment.

However, it isn't without pitfalls. If the wall's pixel pitch is too coarse for the shot, or the wall sits in sharp focus, you get a "moiré effect": those shimmering lines that appear when a camera films a screen. To avoid this, cinematographers have to be precise with their focal length, focus, and distance from the wall. It's a delicate dance between the digital art and the physical lens.
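One rough way to reason about focal length and distance is to compare how large a single LED pixel appears on the camera sensor with the size of one sensor photosite. The Python sketch below uses a simple pinhole approximation, and all of the numbers (2.6 mm pixel pitch, 36 mm sensor, 6000 photosites across) are assumed example values. Treat it as a screening heuristic, not a guarantee: interference is generally most likely when the two grids are of similar scale and the wall is in focus.

```python
def led_pitch_on_sensor_mm(pixel_pitch_mm: float, focal_length_mm: float,
                           distance_m: float) -> float:
    """Approximate size of one LED pixel as imaged on the sensor (pinhole model)."""
    return pixel_pitch_mm * focal_length_mm / (distance_m * 1000.0)


def photosite_pitch_mm(sensor_width_mm: float, horizontal_photosites: int) -> float:
    """Spacing between neighbouring photosites on the sensor."""
    return sensor_width_mm / horizontal_photosites


def pitch_ratio(pixel_pitch_mm: float, focal_length_mm: float, distance_m: float,
                sensor_width_mm: float, horizontal_photosites: int) -> float:
    """How many photosites one LED pixel spans; values near 1 mean similar grids."""
    imaged = led_pitch_on_sensor_mm(pixel_pitch_mm, focal_length_mm, distance_m)
    return imaged / photosite_pitch_mm(sensor_width_mm, horizontal_photosites)


if __name__ == "__main__":
    # Assumed example setups: (focal length in mm, camera-to-wall distance in m)
    for focal, dist in ((50.0, 4.0), (24.0, 10.0)):
        ratio = pitch_ratio(2.6, focal, dist, 36.0, 6000)
        print(f"{focal:.0f} mm lens at {dist:.0f} m: LED pixel spans {ratio:.2f} photosites")
```

In practice, crews confirm with a camera test on the actual wall, and often keep the wall slightly out of focus so the pixel grid is softened.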

Solving the 'Parallax' Problem

One of the biggest hurdles in on-set visualization is parallax. Parallax is the way objects at different distances move across your field of vision at different speeds. If you just put a flat image behind an actor, it looks like a cardboard cutout.

Real-time compositing solves this by using a "frustum." The frustum is the slice of the 3D world that the camera can actually see. The game engine renders the full-resolution version of the scene only inside that frustum and updates its perspective every frame, up to 60 times a second. If the camera dollies left, the background shifts to match. This gives the image a sense of physical presence and scale that a flat 2D matte painting cannot provide.
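The numbers behind parallax and the frustum are easy to sketch with a pinhole-camera approximation. In the Python example below, the 50 mm lens, 36 mm sensor width, and distances are assumed values chosen for illustration, and the function names are hypothetical.

```python
import math


def horizontal_fov_deg(focal_length_mm: float, sensor_width_mm: float) -> float:
    """Horizontal field of view of an ideal pinhole camera."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_length_mm)))


def frustum_width_m(distance_m: float, focal_length_mm: float,
                    sensor_width_mm: float) -> float:
    """Width of the slice of wall (or world) the camera sees at a given distance."""
    return distance_m * sensor_width_mm / focal_length_mm


def sensor_shift_mm(focal_length_mm: float, camera_move_m: float,
                    object_depth_m: float) -> float:
    """How far an object's image slides on the sensor when the camera moves sideways."""
    return focal_length_mm * camera_move_m / object_depth_m


if __name__ == "__main__":
    focal, sensor = 50.0, 36.0  # assumed lens and sensor width in mm
    print(f"Field of view: {horizontal_fov_deg(focal, sensor):.1f} degrees")
    print(f"Frustum width at 4 m: {frustum_width_m(4.0, focal, sensor):.2f} m")
    # A 0.5 m dolly move: a nearby tree slides far more than a distant mountain.
    for name, depth in (("tree at 5 m", 5.0), ("mountain at 500 m", 500.0)):
        print(f"{name}: {sensor_shift_mm(focal, 0.5, depth):.2f} mm shift on sensor")
```

The hundredfold difference in shift between the tree and the mountain is exactly what a flat backdrop cannot reproduce, and what the engine recomputes as the camera moves.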

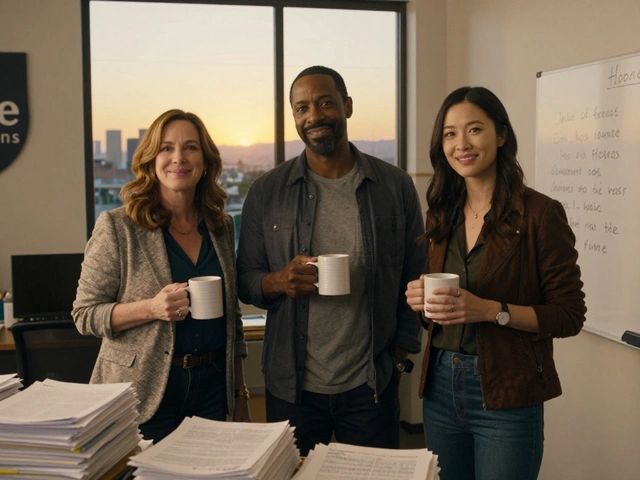

The Human Element: Actors and Directors

We often talk about the tech, but the real win is the psychological impact on the cast and crew. Ask any actor if they prefer a green screen or a digital forest, and they'll pick the forest every time. When an actor can see the horizon, the scale of the mountains, and the atmospheric fog, their performance changes. They aren't just reciting lines; they are reacting to a space.

For the director, it's about confidence. You no longer have to say, "Just pretend there's a spaceship here." You can see the spaceship. You can decide that the ship is too close to the actor and move it three feet to the left in the software. This eliminates a massive amount of anxiety and reduces the number of wasted shots. You're essentially directing the final image, not a placeholder.

Practical Steps for Implementing On-Set Visualization

You don't need a multimillion-dollar Mandalorian-style stage to start using these tools. Many indie productions are using a "hybrid" approach. Here is a simple path to getting started:

- Pre-viz in Unreal Engine: Build your sets digitally before you even hire a crew. This helps you plan your camera moves and lighting needs.

- Use a "Virtual Camera": Use an iPad or a specialized tracking device to move a camera inside the game engine. This allows you to scout the digital set and find the best angles.

- Real-time Monitoring: Set up a screen on set that shows the live camera feed composited over the game engine background. Even a rough version is better than a green screen.

- DMX Integration: Connect your physical lights to the game engine. When the digital sun sets in the engine, your physical studio lights can dim automatically to match.
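As a rough illustration of the DMX step above, the Python sketch below maps the height of a digital sun to an 8-bit dimmer level and writes it into a 512-channel DMX universe. The function names, the 60-degree "full brightness" angle, and the channel number are assumptions for the example. It only builds the data frame; actually sending it to fixtures depends on your interface and is not shown.

```python
DMX_CHANNELS = 512  # one DMX512 universe carries 512 channels of 8-bit values


def sun_to_dmx(elevation_deg: float, full_brightness_deg: float = 60.0) -> int:
    """Map sun elevation to a dimmer value from 0 (off) to 255 (full)."""
    fraction = elevation_deg / full_brightness_deg
    fraction = max(0.0, min(1.0, fraction))  # below horizon -> 0, above limit -> 1
    return round(fraction * 255)


def set_channel(frame: bytearray, channel: int, value: int) -> None:
    """Write a value into a universe frame; DMX channels are numbered from 1."""
    if not 1 <= channel <= DMX_CHANNELS:
        raise ValueError("channel must be between 1 and 512")
    if not 0 <= value <= 255:
        raise ValueError("value must be between 0 and 255")
    frame[channel - 1] = value


if __name__ == "__main__":
    frame = bytearray(DMX_CHANNELS)
    key_light_channel = 1  # hypothetical address of the key light's dimmer
    for elevation in (60.0, 15.0, -5.0):  # midday, late afternoon, after sunset
        set_channel(frame, key_light_channel, sun_to_dmx(elevation))
        print(f"sun at {elevation:+.0f} degrees -> dimmer {frame[key_light_channel - 1]}")
```

A linear mapping is the simplest choice; a real setup would likely also drive color temperature and use a dimming curve that matches the fixtures.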

Future Outlook: Beyond the LED Wall

As we move toward 2027, the line between production and post-production will likely vanish entirely. We're seeing the emergence of AI-driven textures that can change the weather or the time of day in a scene based on a simple voice command. Imagine telling the engine, "Make it rain more heavily in the background," and having it happen instantly while the actor is still in the shot.

We are also seeing a shift toward Neural Radiance Fields (NeRFs), which reconstruct 3D scenes from ordinary photos far faster than modeling them by hand. This means we can capture a real location in Italy and bring it into the studio in Los Angeles with a high degree of visual fidelity, allowing for real-time compositing of actual locations without the cost of travel.

Is real-time compositing as high-quality as traditional rendering?

For on-set visualization, yes. While the 'final' cinema render in a traditional pipeline might be slightly more polished in terms of ray-tracing, the gap is closing. Many productions now use the real-time render as the final shot (this is called 'final pixel'), especially when using high-end LED volumes.

Do I need a supercomputer to run these game engines?

You need a powerful GPU, typically from the NVIDIA RTX series, to handle the real-time lighting calculations. However, for basic on-set visualization, a high-end gaming workstation is often sufficient. For full-scale LED volumes, clusters of synchronized servers are used to keep the image seamless.

What is the biggest drawback of using an LED Volume?

The cost and the "moiré" effect. LED walls are expensive to build and maintain. Additionally, if the camera gets too close to the wall, focuses directly on it, or uses certain focal lengths, the physical pixel grid of the LED wall can show up in the shot, which requires careful lens management.

Can this technology be used for indie films?

Absolutely. While LED walls are pricey, using a game engine for pre-visualization and real-time monitoring on a green screen is accessible to anyone with a decent PC. It allows indie filmmakers to plan their shots better and reduce expensive mistakes during the shoot.
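For a sense of what green-screen monitoring does under the hood, here is a deliberately simple keyer in Python. It decides how "green" each camera pixel is and blends in the engine's background accordingly. The thresholds and function names are assumptions for illustration; production keyers are far more sophisticated and run on the GPU.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # 8-bit (red, green, blue)


def key_alpha(pixel: Pixel, low: int = 20, high: int = 80) -> float:
    """Foreground opacity: 1.0 keeps the camera pixel, 0.0 shows the background."""
    r, g, b = pixel
    dominance = g - max(r, b)  # how far green exceeds the other channels
    t = (dominance - low) / (high - low)
    return 1.0 - max(0.0, min(1.0, t))


def composite(fg: Pixel, bg: Pixel) -> Pixel:
    """Blend one camera pixel over one engine pixel using the key."""
    a = key_alpha(fg)
    r, g, b = (round(f * a + k * (1.0 - a)) for f, k in zip(fg, bg))
    return (r, g, b)


def composite_row(camera: List[Pixel], engine: List[Pixel]) -> List[Pixel]:
    """Composite a row of pixels; a frame is just many rows."""
    return [composite(f, k) for f, k in zip(camera, engine)]


if __name__ == "__main__":
    camera_row = [(0, 255, 0), (200, 150, 120)]  # green screen, then skin tone
    engine_row = [(10, 20, 30), (10, 20, 30)]    # rendered night sky
    print(composite_row(camera_row, engine_row))
```

Even a rough key like this is enough to judge framing and scale on a monitor, which is the point of on-set visualization.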

How does camera tracking actually work?

It uses a mix of sensors. Some systems use infrared markers on the ceiling and the camera to track position, while others use internal gyroscopes and accelerometers (IMUs). This data is sent to the game engine, which moves the virtual camera to match the real one's movement in real time.

Next Steps for Filmmakers

If you're a director or DP, start by downloading Unreal Engine. It's free to start, and a massive library of assets is available through Epic's marketplace. Try building a simple room and moving a camera through it. If you're a producer, look into the cost-benefit of "virtual scouting" (using a digital version of your set to plan shots), which can save thousands in crew hours and equipment rentals.

The goal isn't to replace traditional filmmaking, but to give us a better window into the final product. The more we can see on set, the more we can focus on the thing that actually matters: the story and the performance.