Think about the last time you watched a movie where characters walked through a storm on Mars, or a dragon flew over a city that didn’t exist. Those scenes weren’t shot on location or built with giant sets. They were filmed on a soundstage - with a giant curved wall of LEDs glowing behind the actors. This isn’t science fiction anymore. It’s happening right now, on sets across Hollywood and beyond. And it’s changing how movies are made - for good.

What Are LED Walls in Film Production?

LED walls, also called volume stages or LED volumes, are massive screens made of high-resolution LED panels that surround actors and sets. These aren’t your average TV screens. They’re built for film: up to 8K resolution across the wall, around 1,000 nits of brightness, and refresh rates fast enough to avoid flicker and banding on camera, even at high frame rates. The content on these walls isn’t static. It’s rendered in real time using game engines like Unreal Engine, and it responds to camera movement and to live changes in lighting or weather.
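One way to reason about the refresh-rate requirement is to check whether a camera exposure spans a whole number of LED refresh cycles, since a partial cycle means different frames can integrate different amounts of light. The sketch below is only that arithmetic, under stated assumptions: the function names are hypothetical, the 3840 Hz refresh rate is an illustrative value rather than a spec from any particular wall, and real stages also rely on genlock and vendor tooling that this does not model.

```python
from fractions import Fraction


def refresh_cycles_per_exposure(fps, shutter_angle_deg, refresh_hz):
    """Return how many LED refresh cycles fit inside one camera exposure.

    Exposure time is (shutter_angle / 360) / fps seconds, and one refresh
    cycle lasts 1 / refresh_hz seconds, so the count is their ratio.
    """
    # Fraction(str(...)) keeps values such as 172.8 exact instead of
    # inheriting binary floating-point rounding.
    fps, angle, refresh = (
        Fraction(str(v)) for v in (fps, shutter_angle_deg, refresh_hz)
    )
    if fps <= 0 or refresh <= 0 or not 0 < angle <= 360:
        raise ValueError("fps and refresh_hz must be > 0; angle in (0, 360]")
    return (angle / 360) * refresh / fps


def exposure_is_flicker_safe(fps, shutter_angle_deg, refresh_hz):
    """True when the exposure covers a whole number of refresh cycles."""
    cycles = refresh_cycles_per_exposure(fps, shutter_angle_deg, refresh_hz)
    return cycles.denominator == 1


if __name__ == "__main__":
    # Hypothetical wall refreshing at 3840 Hz, camera running at 24 fps.
    print(refresh_cycles_per_exposure(24, 180, 3840))
    print(exposure_is_flicker_safe(24, 180, 3840))
    print(exposure_is_flicker_safe(24, 172.8, 3840))
```

With these illustrative numbers, a 180-degree shutter covers a whole number of cycles while a 172.8-degree shutter does not, which is the kind of mismatch a crew would want to catch before rolling.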

Before LED walls, green screens were the standard. Actors performed in front of a flat green backdrop, and VFX artists added backgrounds later. But green screens had problems. Reflections on costumes, mismatched lighting, and awkward shadows made it hard to sell the illusion. Plus, actors had to imagine the world around them - which often showed in their performances.

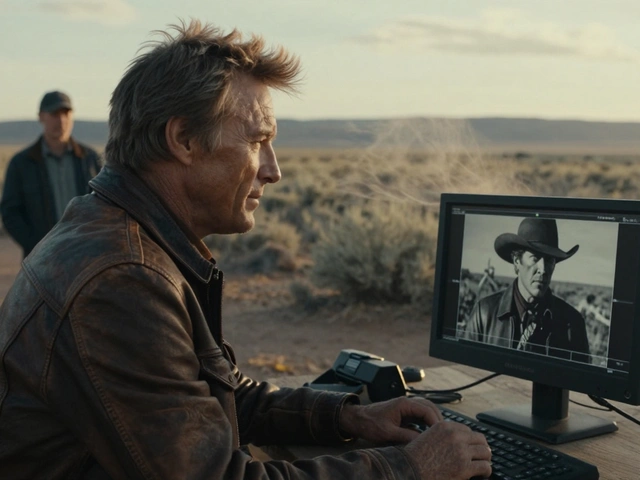

LED walls fix that. The environment is real, visible, and interactive. A character’s eyes reflect the glow of a sunset on the wall. A car’s paint catches the light of a virtual city. The crew can see exactly what the camera will capture - no guesswork.

In-Camera VFX: Seeing the Final Shot as You Shoot It

In-camera VFX means the final visual effect is captured live during filming. No post-production compositing. No blue screens. Just pure, real-time rendering that becomes part of the footage the moment the camera rolls.

This isn’t magic. It’s a combination of three things: LED volume technology, real-time rendering engines, and camera tracking. A camera-mounted sensor tells the system exactly where the camera is in 3D space. The engine then adjusts the background in real time to match the perspective. If the camera pans left, the virtual city shifts left. If the camera tilts up, the sky rises. It’s like having a living backdrop that moves with the camera.
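The perspective adjustment described above comes down to simple geometry: for each virtual object, find where the line from the tracked camera to that object crosses the wall, and draw it there. The sketch below shows this for a flat wall on the plane z = 0. It is a minimal illustration, not how Unreal Engine or any tracking vendor implements it; `Vec3` and `project_to_wall` are hypothetical names, and real volumes are curved and render a full camera frustum rather than single points.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float


def project_to_wall(camera: Vec3, point: Vec3, wall_z: float = 0.0) -> tuple[float, float]:
    """Return the (x, y) spot on the wall plane where `point` must be drawn
    so that it lines up correctly when seen from `camera`.

    Assumes the camera is in front of the wall (camera.z < wall_z) and the
    virtual point is on or behind it (point.z >= wall_z).
    """
    if not (camera.z < wall_z <= point.z):
        raise ValueError("camera must be in front of the wall, point behind it")
    # Fraction of the way along the camera-to-point ray at which it hits the wall.
    t = (wall_z - camera.z) / (point.z - camera.z)
    return (
        camera.x + t * (point.x - camera.x),
        camera.y + t * (point.y - camera.y),
    )


if __name__ == "__main__":
    tower = Vec3(0.0, 1.5, 12.0)  # virtual object 12 m behind the wall
    print(project_to_wall(Vec3(0.0, 1.5, -4.0), tower))   # camera centered
    print(project_to_wall(Vec3(-1.0, 1.5, -4.0), tower))  # camera moved 1 m left
```

In this setup, moving the camera 1 m to the left moves the drawn tower 0.75 m to the left on the wall. Objects farther behind the wall follow the camera more closely, which is what makes them read as distant.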

Shows like The Mandalorian proved this works. In season one, the team used a 20-foot-tall, 270-degree LED volume to simulate alien landscapes. The actors saw the environment. The director saw the final shot. The lighting on the actors matched the virtual sun. And the whole thing was captured in-camera. No green screen cleanup. No hours of rotoscoping. Just clean footage, ready for color grading.

Why This Beats Traditional Green Screen

Green screens still work. But they’re outdated for high-end productions. Here’s why LED walls are winning:

- Real lighting: The LED wall emits actual light. It hits the actors’ skin, clothes, and props. That means no fake shadows or mismatched color temperatures.

- Immediate feedback: Directors and cinematographers can adjust shots on the fly. If the sunset looks too orange, they tweak the render in seconds.

- Faster post-production: With in-camera VFX, you don’t need to wait weeks for VFX teams to composite and render backgrounds. The background is already in the shot when you wrap.

- Better performances: Actors react to real environments. They don’t have to guess where the dragon is or how the wind blows. Their emotions feel more authentic.

One director on a recent sci-fi film told his crew: "We didn’t shoot a green screen. We shot a world." That’s the shift.

The Technology Behind the Scenes

LED walls don’t work alone. They’re part of a bigger system:

- Unreal Engine: The most common real-time rendering engine used. It’s built for games, but it’s now the go-to for film. It handles lighting, physics, and animation in real time.

- Camera tracking systems: Companies like Vicon and Mo-Sys use infrared markers and optical sensors to track camera position and rotation with sub-millimeter accuracy.

- Content pipelines: Artists create 3D environments in tools like Maya or Blender, then export them into Unreal. These environments are optimized for real-time rendering: polygon counts and texture sizes are kept low enough for the engine to hold its target frame rate.

- Lighting calibration: Every LED panel is individually calibrated for color and brightness. If one panel is too dim, it creates a visible seam. Teams spend days tuning the wall to match the virtual sun’s intensity.
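The brightness side of panel calibration can be sketched as a simple normalization: measure each panel, pick the dimmest one as the target (a panel can be dimmed but not pushed past its maximum), and scale the rest down to match. The function name, panel IDs, and nit values below are hypothetical, and real calibration also corrects color per panel or per pixel with vendor tools, which this sketch ignores.

```python
def brightness_gains(measured_nits: dict[str, float]) -> dict[str, float]:
    """Return a gain in (0, 1] per panel that brings every panel down to
    the brightness of the dimmest one, so no panel stands out as a seam.
    """
    if not measured_nits:
        raise ValueError("need at least one panel measurement")
    if any(nits <= 0 for nits in measured_nits.values()):
        raise ValueError("measured brightness must be positive")
    # The dimmest panel sets the target because gains can only reduce output.
    target = min(measured_nits.values())
    return {panel: target / nits for panel, nits in measured_nits.items()}


if __name__ == "__main__":
    # Hypothetical peak-white readings from a light meter, in nits.
    readings = {"A1": 1000.0, "A2": 950.0, "A3": 1025.0}
    for panel, gain in brightness_gains(readings).items():
        print(f"{panel}: gain {gain:.3f}")
```

Matching to the dimmest panel costs some peak brightness across the whole wall, which is one reason a single weak panel is usually replaced rather than calibrated around.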

It’s not cheap. A full volume stage can cost $5 million to build. But it’s cheaper than flying to Iceland for a week, building a physical set, or paying for 12 weeks of VFX work.

Real Examples: What’s Been Shot This Way?

Here’s what’s been done with LED walls and in-camera VFX:

- The Mandalorian (Disney+): The first major TV show to use this tech. Used a 270-degree LED volume. Saved millions on location shoots.

- Avatar: The Way of Water (2022): Used LED walls for underwater scenes. Actors saw virtual coral reefs and glowing sea creatures while performing.

- Obi-Wan Kenobi (Disney+): Filmed entire planet scenes on LED walls. No green screen on set.

- The Batman (2022): Used LED volumes for Gotham City night scenes. The reflections on the Batmobile’s surface were real.

- Star Wars: Skeleton Crew (2024): Shot in a new 180-foot-wide LED volume. One scene had a character walking through a collapsing alien city - all rendered live.

These aren’t gimmicks. They’re production choices. And studios are switching because the results are better - and faster.

What About the Crew? Is It Hard to Learn?

It’s a new skill set. Cinematographers used to work with natural light, gels, and flags. Now they work with render settings, exposure values, and color grading in a game engine. But it’s not as hard as it sounds.

Most crews learn in a few weeks. The biggest shift? Thinking like a game developer. Instead of asking, "How do we light this?" they ask, "What’s the light source in the virtual environment?" Then they match it.

Camera operators now work with motion tracking systems. Focus pullers need to understand depth maps. Gaffers check LED brightness levels instead of just wattage. It’s not about replacing old skills - it’s about adding new ones.

Limitations and Pitfalls

LED walls aren’t perfect. Here’s where they struggle:

- Resolution limits: Even an 8K wall can’t match the detail of a real landscape. If the camera gets too close or holds sharp focus on the wall, you’ll see individual pixels or moiré patterns.

- Reflection issues: Highly reflective surfaces (like glass or wet pavement) can show seams or flicker if the LED content doesn’t match the camera angle.

- Cost of entry: A full volume is out of reach for indie films. But smaller setups - 10x10-foot walls - are now available for rent.

- Over-reliance: Some directors use LED walls just because they’re trendy. But if the environment isn’t well-designed, it looks fake.
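The resolution limit in the list above can be estimated with basic lens geometry: compare the size of one LED pixel as it lands on the sensor with the size of one camera pixel. The sketch below uses a pinhole approximation that assumes the wall is much farther away than the lens focal length. The function name and all example values (pixel pitch, distance, lens, sensor) are hypothetical and chosen only for illustration.

```python
def camera_pixels_per_led_pixel(
    pitch_mm: float,
    distance_m: float,
    focal_mm: float,
    sensor_width_mm: float,
    image_width_px: int,
) -> float:
    """Estimate how many camera pixels one LED pixel spans when in focus.

    Values well above 1 mean individual LEDs can be resolved, so the wall
    needs to be farther away or out of focus to avoid visible pixels.
    """
    if min(pitch_mm, distance_m, focal_mm, sensor_width_mm, image_width_px) <= 0:
        raise ValueError("all inputs must be positive")
    # Pinhole model: image size = object size * focal length / distance.
    led_pixel_on_sensor_mm = pitch_mm * focal_mm / (distance_m * 1000.0)
    camera_pixel_mm = sensor_width_mm / image_width_px
    return led_pixel_on_sensor_mm / camera_pixel_mm


if __name__ == "__main__":
    # Hypothetical setup: 2.8 mm pitch wall, 5 m away, 50 mm lens,
    # 36 mm wide sensor recording 4096 pixels across.
    ratio = camera_pixels_per_led_pixel(2.8, 5.0, 50.0, 36.0, 4096)
    print(f"one LED pixel covers about {ratio:.2f} camera pixels")
```

Doubling the distance halves the ratio, and a longer lens raises it, which matches the practical advice to keep the wall back from the action and slightly soft in focus.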

The best results come when the virtual environment is treated like a real location. Lighting matters. Weather matters. Movement matters. It’s not a backdrop - it’s a character in the scene.

The Future: What’s Next?

LED walls are just the beginning. In 2026, we’re seeing:

- AI-generated environments: Directors describe a scene in words - "a jungle at dusk with glowing vines and distant thunderstorms" - and AI builds it in seconds.

- Mobile LED stages: Companies are building trailer-mounted volumes. You can shoot a spaceship scene in a parking lot.

- Hybrid sets: Physical props are combined with LED walls. A real tree stands in front of a virtual forest. The lighting matches perfectly.

- Real-time AI compositing: Cameras now auto-remove green screens and composite elements live. No post needed.

One studio in Atlanta is testing a system where actors wear haptic suits. When they walk through a virtual storm, they feel wind and rain on their skin. The LED wall shows the rain. The camera captures it. The performance? Unbelievable.

This isn’t about replacing traditional filmmaking. It’s about expanding it. The tools are more powerful. The creativity is wider. And the results? More real than ever.
